Consider the following scenario: you have two physical sites, production and failover, each with a Hyper-V host and a SAN. The production site runs a Hyper-V failover cluster, while the failover site has a standalone Hyper-V host. The SAN volumes are replicated from production to the failover site, as shown in the table below.

| | Production Site | Failover Site |
|---|---|---|
| Hyper-V host | PRODHYPERV1 | BACKUPHYPERV1 |
| SAN | PRODSAN1 | BACKUPSAN1 |
| VM location | C:\ClusterStorage\VMName | E:\VMs |

You want to perform a failover test.

You now have all of the raw VM files from the production SAN accessible at your failover site, but the Hyper-V host doesn’t know about the VMs yet. When you try to register them with Import-VM, you receive this error:

Import-VM : Failed to import a virtual machine.

An unexpected error occurred: Catastrophic failure (0x8000FFFF).

Failed to import a virtual machine.

The Hyper-V Virtual Machine Management service encountered an unexpected error: Catastrophic failure (0x8000FFFF).

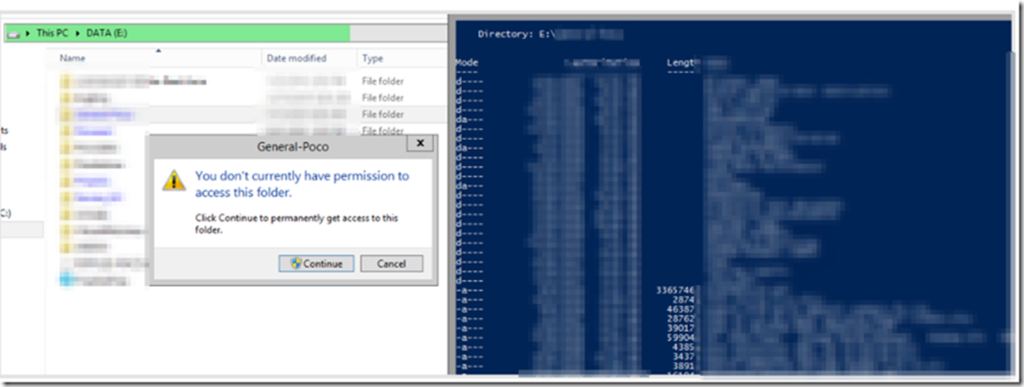

What’s happening? The VMCX file in the VM folder holds the VM’s configuration, including the paths to its virtual hard disks. Because the volume was replicated from the production site, those paths still point to C:\ClusterStorage\[VMName]. The failover host has no such path, so the import fails.
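One way to confirm this diagnosis is to list each incompatibility in a Compare-VM report alongside the path it references and whether that path exists on the failover host. The helper below is a sketch: the function name Get-VMImportPathIssue is hypothetical (not a built-in cmdlet), and it assumes that incompatibilities whose Source has no Path property (a network adapter, for instance) should simply show an empty path.

```powershell
# Hypothetical helper: summarize the paths referenced by a Compare-VM report
function Get-VMImportPathIssue {
    param([Parameter(Mandatory)] $Report)

    foreach ($issue in $Report.Incompatibilities) {
        # Not every incompatibility source has a Path (e.g. a network adapter)
        $path = $issue.Source.Path

        # Check the referenced file on this host; leave $null when there is no path
        $exists = $null
        if ($path) { $exists = Test-Path -LiteralPath $path }

        [pscustomobject]@{
            MessageId = $issue.MessageId
            Message   = $issue.Message
            Path      = $path
            Exists    = $exists
        }
    }
}
```

For example, after $report = Compare-VM -Path 'D:\VMNAME1\Virtual Machines\310E30DE-F8B0-44DA-A9E9-062027CC93D1.vmcx', running Get-VMImportPathIssue -Report $report | Format-Table -AutoSize should show the disk paths under C:\ClusterStorage with Exists set to False.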

To fix this, you need to update the VMCX file with paths that are valid on the failover Hyper-V host. Because the VMCX file is binary, you can’t simply edit it in Notepad. Instead, do the following:

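```powershell
# Build a compatibility report from the replicated VMCX file
$report = Compare-VM -Path 'D:\VMNAME1\Virtual Machines\310E30DE-F8B0-44DA-A9E9-062027CC93D1.vmcx'
# Show the objects flagged as incompatible, including their current paths
$report.Incompatibilities.Source | Format-Table
# Point the first flagged virtual disk at its location on the failover host
$report.Incompatibilities[0].Source | Set-VMHardDiskDrive -Path 'D:\VMNAME1\vmname1.vhdx'
# Import the VM using the corrected report
Import-VM -CompatibilityReport $report
```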

- Use Compare-VM to load the VM’s configuration from the VMCX file into a compatibility report object ($report).

- View the currently configured paths by inspecting the $report.Incompatibilities.Source property.

- Note that they still point to C:\ClusterStorage.

- Update the path with Set-VMHardDiskDrive, as shown above, to correct the PowerShell object.
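The commands above repair only the first incompatibility. A VM with several virtual disks will typically report one incompatibility per missing disk, so a loop can save some typing. The sketch below assumes that every disk sits under one production root that maps to one failover root with the same folder layout beneath it, and that MessageId 40010 identifies a missing virtual hard disk; check $report.Incompatibilities | Format-Table MessageId, Message on your own host before relying on that. Convert-VMPathRoot and Repair-VMReportDiskPath are hypothetical helpers, not built-in cmdlets.

```powershell
# Hypothetical helper: swap the production root of a path for the failover root
function Convert-VMPathRoot {
    param(
        [Parameter(Mandatory)] [string] $Path,
        [Parameter(Mandatory)] [string] $OldRoot,
        [Parameter(Mandatory)] [string] $NewRoot
    )
    # Match on a whole folder boundary so C:\ClusterStorageOld is not treated as C:\ClusterStorage
    $prefix = $OldRoot.TrimEnd('\') + '\'
    # Windows paths are case-insensitive, so compare the prefix ignoring case
    if ($Path.StartsWith($prefix, [System.StringComparison]::OrdinalIgnoreCase)) {
        return $NewRoot.TrimEnd('\') + '\' + $Path.Substring($prefix.Length)
    }
    return $Path  # paths outside OldRoot are left untouched
}

# Hypothetical helper: repoint every missing-disk incompatibility in a Compare-VM report
function Repair-VMReportDiskPath {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)] $Report,
        [Parameter(Mandatory)] [string] $OldRoot,
        [Parameter(Mandatory)] [string] $NewRoot
    )
    # Assumption: MessageId 40010 means "virtual hard disk file not found"
    foreach ($issue in ($Report.Incompatibilities | Where-Object MessageId -eq 40010)) {
        $newPath = Convert-VMPathRoot -Path $issue.Source.Path -OldRoot $OldRoot -NewRoot $NewRoot
        Write-Verbose "Repointing $($issue.Source.Path) -> $newPath"
        # Same change as the single-disk example, applied to each flagged disk
        Set-VMHardDiskDrive -VMHardDiskDrive $issue.Source -Path $newPath
    }
}
```

With the layout from the table at the top of this post, you might run Repair-VMReportDiskPath -Report $report -OldRoot 'C:\ClusterStorage' -NewRoot 'E:\VMs' -Verbose and then Import-VM -CompatibilityReport $report. Keeping the string rewrite in its own function lets you try the mapping on a few sample paths before changing the report.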

With the paths corrected in the report object, you can now run Import-VM again, this time passing the modified object to the -CompatibilityReport parameter.

The VM should now import successfully and boot normally.
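Before booting, it may be worth confirming that the imported VM’s disks really point at the failover host’s storage. The following is a minimal sketch, assuming the VM keeps its production name after import; the function name Start-ImportedVM is hypothetical. It lists each attached virtual disk and calls Start-VM only if every referenced VHD/VHDX file exists.

```powershell
# Hypothetical helper: check an imported VM's disk paths, then start it
function Start-ImportedVM {
    param([Parameter(Mandatory)] [string] $Name)

    # List each attached virtual disk and where it now points
    $disks = @(Get-VM -Name $Name | Get-VMHardDiskDrive)
    $disks | Format-Table VMName, ControllerType, Path -AutoSize | Out-Host

    # Skip disks without a Path (e.g. pass-through disks); flag any file that is absent
    $missing = @($disks | Where-Object { $_.Path -and -not (Test-Path -LiteralPath $_.Path) })
    if ($missing.Count -gt 0) {
        Write-Warning "Not starting $Name; missing disk(s): $($missing.Path -join ', ')"
        return $false
    }

    Start-VM -Name $Name
    return $true
}
```

For example, Start-ImportedVM -Name 'VMNAME1' returns $true after starting the VM, or $false with a warning naming any disk that still points at a path the failover host does not have.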